
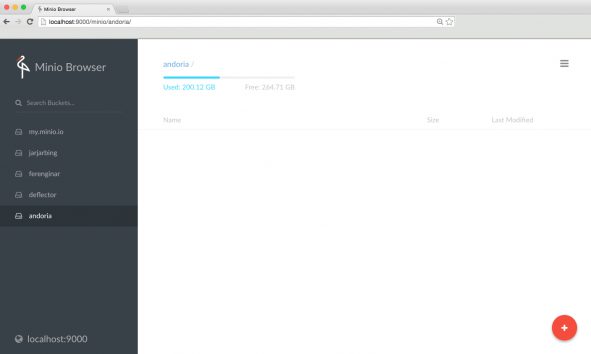

There are parts of your stack that can run locally and others that can't. If you're using AWS for your cloud infrastructure, you most likely use S3 as object storage. A tool named MinIO provides an S3-compatible API, which gives you one more piece of the puzzle running locally during development.

You can have your app running locally and still use a bunch of services in the cloud, so you might ask why you need it. The answer is simple: you can run a dev environment that is totally independent of your internet connection (e.g. on a plane), you don't have additional costs, you can ship dev configuration in the source code, and you can write tests that create and destroy anything you need.

But not just that: MinIO is production-ready software. That means you can run it on top of your own infrastructure and still use a lot of the ready-made libraries available for S3.

Here's a very simple docker compose configuration that can bootstrap you. It starts with `version: '3.5'`, and the entrypoint of the bucket-creating service contains `mc config host add s3 "test-s3-access-key" "test-s3-secret-key" &`.

It will start a MinIO server using the official Docker image and make it store files in the s3 volume (you can also use a local directory if you want to inspect files manually). The server will be available on port 9000, and you can use the access and secret keys to reach it programmatically or via the MinIO web UI.

MinIO itself provides only the storage infrastructure and a basic web UI. To use its full potential you need a tool that ships as a separate executable. That tool is mc, and you can use it to configure local or remote MinIO servers.

In this example the service named createbuckets is responsible for creating the buckets we need and then exiting. In the entrypoint, sleep gives MinIO some time to start before we begin configuring it. Then we configure a new host (in real-life applications you can use mc to access multiple MinIO servers). You can see that we give it a URL (using the Docker service name) plus access and secret keys that have rights to change configuration. Then we make a bucket named blog on the s3 host. Finally we set its policy to download, which makes it possible to read files without additional auth: basically public read.

With mc you can do a bunch of other things, such as creating additional users or policies and assigning different policies to different users. Apart from MinIO, you can also install and configure any other S3-compatible object storage service as the destination for Kubernetes workload backups.

I'm trying to access the MinIO S3 API endpoint from within my container, but my app can't resolve the container name. The issue is that the framework I'm using uses the smithy/middleware-endpoint API, which requires a fully qualified URL. My MinIO instance is started with the rest of the stack, with the endpoint passed into my app.
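The compose setup this post describes could be sketched roughly as follows. Treat it as a minimal sketch, not the exact original file: the service name `s3`, the `/data` path, the `sleep 10` duration, the `http://s3:9000` URL and the environment variable names are assumptions filled in for illustration. The `mc` subcommands follow the older syntax used in this post; newer `mc` releases renamed them (`mc alias set` and `mc anonymous set download`), and newer MinIO servers expect `MINIO_ROOT_USER` and `MINIO_ROOT_PASSWORD` instead.

```yaml
version: '3.5'

services:
  # MinIO server (service name "s3" is an assumption for this sketch)
  s3:
    image: minio/minio
    ports:
      - "9000:9000"            # S3 API and web UI on port 9000
    volumes:
      - s3:/data               # swap for ./data:/data to inspect files manually
    environment:
      # Older variable names; recent releases use MINIO_ROOT_USER / MINIO_ROOT_PASSWORD
      MINIO_ACCESS_KEY: test-s3-access-key
      MINIO_SECRET_KEY: test-s3-secret-key
    command: server /data

  # One-shot helper: waits, configures the host, creates the bucket, exits
  createbuckets:
    image: minio/mc
    depends_on:
      - s3
    entrypoint: >
      /bin/sh -c "
      sleep 10 &&
      mc config host add s3 http://s3:9000 test-s3-access-key test-s3-secret-key &&
      mc mb s3/blog &&
      mc policy set download s3/blog &&
      exit 0
      "

volumes:
  s3:
```

With a file like this in place, `docker-compose up` should bring up the server and run the helper once. Under these assumptions, objects uploaded to the `blog` bucket would then be readable without credentials at `http://localhost:9000/blog/<object-name>`. Note that `depends_on` only orders container startup and does not wait for MinIO to be ready, which is why the entrypoint sleeps first.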
